The use of Artificial Intelligence technology has revolutionized the development of software services. An AI paper grader is highly efficient software that grades research papers and student assignments quickly. The AI software combines human understanding with machine learning: it gathers its grading metrics from papers that have already been graded by teachers or professors, and it stores this manual grading information to update those metrics.

AI Grade Calculators Grade Assignments In Bulk With Accuracy And Within Seconds

AI software can learn from the available data. The graded papers provide data that initiates a process of learning, and the software learns to replicate the grading process that human beings use. Machine learning, combined with Artificial Intelligence, gives the paper grader the ability to assign grades to papers automatically. There is a negligible difference between the grades assigned by an AI paper checker and those assigned by a human being. The swift grading process of an AI checker enables the bulk grading of papers.

The Valuable Attributes Of AI Paper Checkers

AI paper checkers decrease the load on teachers by enabling automatic grading. Apart from this prominent aspect, other features of an AI grading tool make it highly useful. Some of these attributes are listed below:

- The grading feature of an AI tool updates itself by absorbing new information. The paper grader's ability to adjust its grading settings by scanning human-graded papers ensures that the grading process doesn't become outmoded. This continuous learning improves the grading technique employed by the software.
- An AI paper checker can also grade manuscripts of research papers or essays because it has an optical character recognition facility.
- AI paper graders can scan content in multiple languages. This feature helps in checking papers written in different languages.
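To make the idea of learning grading metrics from teacher-graded papers a little more concrete, here is a toy sketch. It is not how any particular grading product works: the single feature (`rubric_points`), the sample data, and the function names are all hypothetical, and a plain least-squares line stands in for whatever model a real grader would use.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


# Hypothetical training data taken from papers a teacher has already graded.
# Assumption: one numeric feature per paper (rubric points covered).
rubric_points = [2, 4, 5, 7, 9]
teacher_grades = [50, 60, 65, 75, 85]

slope, intercept = fit_line(rubric_points, teacher_grades)


def predict_grade(points):
    """Grade an unseen paper using the line learned from teacher grades."""
    return slope * points + intercept


print(predict_grade(6))
```

Refitting `fit_line` whenever new teacher-graded papers arrive is one simple way to picture the "continuous learning" described above.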
In simple terms, a paper grader is a software solution that helps assign appropriate grades to student papers. Moreover, the scores are consistently accurate for every student. Because the grading is done by AI, assignments can be graded in bulk within a short span of time.

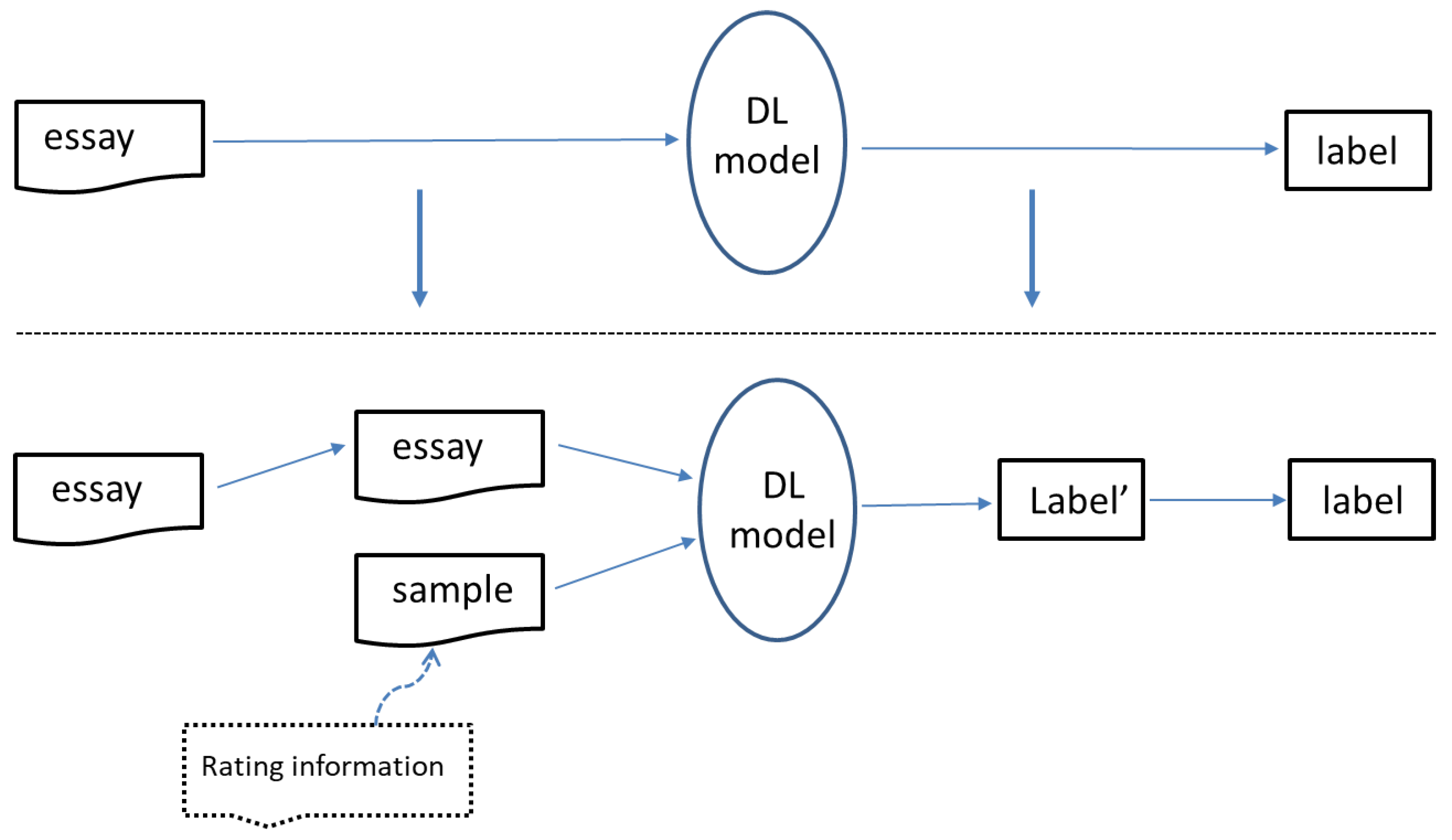

Manual Grading Often Needs Two People’s Intervention

Two teachers often check each assignment to keep the grading method free from bias. Their grades are compared to ascertain the difference, and the average of the grades given by the different teachers is assigned to the paper to ensure a fair grade. This manual method is well suited to keeping the grading process transparent. However, it is very time-consuming, and more than one teacher is required to grade a single paper. Software developers have created a novel solution in the form of an automatic paper grader to ensure that the quality of grading is not lowered. Automatic grading not only saves precious time but also provides dependable results.

Abstract: Automated Essay Scoring (AES) is one of the most challenging problems in Natural Language Processing (NLP). The significant challenges include the length of the essay, the presence of spelling mistakes affecting the quality of the essay, and representing the essay in terms of relevant features for efficient scoring. In this work, we present a comparative empirical analysis of AES models based on combinations of various feature sets. We use 30 manually extracted features, a 300-dimensional word2vec representation, and 768-dimensional word embedding features from the BERT model, and form different combinations of them for evaluating the performance of AES models. We formulate the automated essay scoring problem as a rescaled regression problem and as a quantized classification problem. We analyzed the performance of AES models for the different combinations and compared them against existing ensemble approaches in terms of Kappa statistics for the rescaled regression problem and accuracy for the quantized classification problem. A combination of all three feature sets achieves up to 77.2 ± 1.7 on Kappa statistics for the rescaled regression problem and 75.2 ± 1.0 accuracy for the quantized classification problem on a benchmark dataset of about 12,000 essays divided into eight groups. The reported results point researchers in the field toward using manually extracted features along with deep encoded features to develop a more reliable AES model.
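The feature combination and the two evaluation measures mentioned in the abstract can be illustrated with a short sketch. This is not the authors' implementation. The abstract only says "Kappa statistics", so quadratic weighted kappa is assumed here because it is common in essay scoring; the rescaling to [0, 1], the rounding rule used for quantization, the sample scores, and all function names are likewise assumptions made for illustration.

```python
def combine_features(manual, word2vec, bert):
    """Concatenate the three feature sets into one vector (30 + 300 + 768)."""
    return list(manual) + list(word2vec) + list(bert)


def quantize(value, lo, hi):
    """Map a rescaled prediction in [0, 1] to the nearest integer score in [lo, hi]."""
    clipped = min(1.0, max(0.0, value))
    return lo + round(clipped * (hi - lo))


def accuracy(truth, predicted):
    """Fraction of essays whose predicted score equals the human score."""
    return sum(t == p for t, p in zip(truth, predicted)) / len(truth)


def quadratic_weighted_kappa(a, b, min_rating, max_rating):
    """Agreement between two integer ratings; large disagreements cost more."""
    n = max_rating - min_rating + 1
    observed = [[0] * n for _ in range(n)]
    for x, y in zip(a, b):
        observed[x - min_rating][y - min_rating] += 1
    total = len(a)
    hist_a = [sum(row) for row in observed]
    hist_b = [sum(observed[i][j] for i in range(n)) for j in range(n)]
    num = den = 0.0
    for i in range(n):
        for j in range(n):
            weight = (i - j) ** 2 / (n - 1) ** 2
            num += weight * observed[i][j]
            den += weight * hist_a[i] * hist_b[j] / total
    return 1.0 - num / den


# Placeholder vectors with the dimensions named in the abstract.
features = combine_features([0.0] * 30, [0.0] * 300, [0.0] * 768)
print(len(features))  # 1098

# Hypothetical human scores on a 1-4 scale and rescaled model outputs.
human = [1, 2, 3, 4, 2]
rescaled_predictions = [0.05, 0.30, 0.70, 0.95, 0.60]
predicted = [quantize(p, 1, 4) for p in rescaled_predictions]

print(predicted)                   # [1, 2, 3, 4, 3]
print(accuracy(human, predicted))  # 0.8
print(quadratic_weighted_kappa(human, predicted, 1, 4))
```

Quadratic weighting penalizes a prediction that is three points off far more than one that is a single point off, which is why a kappa of this kind is often preferred to plain accuracy for ordinal essay scores.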
